Introduction

A neural processing unit (NPU) is a specialized chip designed to accelerate AI tasks on devices like phones, laptops, and edge systems. It is one of the key chips behind modern on-device AI features. If your phone can enhance photos instantly, translate speech in real time, or recognize your face without sending data to the cloud, an NPU is often part of what makes that possible.

Unlike a CPU, which handles general computing tasks, or a GPU, which excels at parallel processing for graphics and large compute workloads, an NPU is designed specifically for AI inference. That specialization allows it to run many machine learning tasks with better power efficiency, which is why NPUs matter so much in phones, laptops, wearables, cameras, and other edge devices.

As devices move toward more private, faster, and always-available AI features, understanding the difference between an NPU and a GPU is no longer just for engineers. It helps explain why some devices feel smarter, respond faster, and use less battery while running AI features locally. This shift is closely tied to the rise of edge computing and on-device intelligence.

What Is a Neural Processing Unit (NPU)?

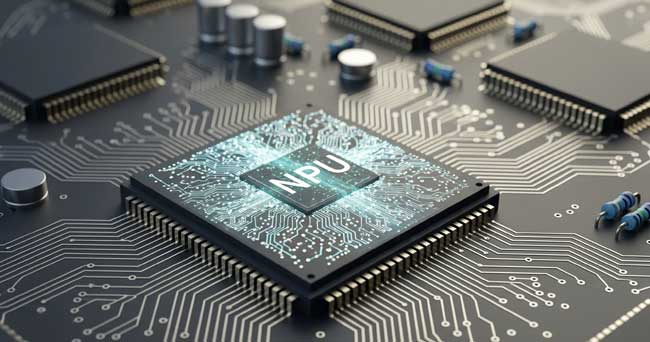

A neural processing unit is a specialized processor built to accelerate AI and machine learning workloads, especially neural network inference. In simple terms, it helps devices run trained AI models quickly and efficiently.

NPUs are optimized for operations common in AI models, such as matrix multiplication, tensor operations, and convolutions. Because the hardware is tuned for these patterns, an NPU can often deliver strong AI performance with much lower power use than more general-purpose chips.
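To make the "patterns" above concrete, here is a minimal Python sketch of a 2D convolution written as plain multiply-accumulate (MAC) steps. The function name `conv2d_valid` and the sample data are illustrative assumptions, not taken from any NPU toolkit. An NPU would execute many of these MACs in parallel in hardware; the loop only shows the arithmetic shape of the workload.

```python
def conv2d_valid(image, kernel):
    """Naive 2D convolution (no padding, stride 1) on nested lists.

    Returns the output feature map and the number of
    multiply-accumulate (MAC) operations performed.
    """
    ih, iw = len(image), len(image[0])
    kh, kw = len(kernel), len(kernel[0])
    oh, ow = ih - kh + 1, iw - kw + 1
    out = [[0] * ow for _ in range(oh)]
    macs = 0
    for r in range(oh):
        for c in range(ow):
            acc = 0
            for i in range(kh):
                for j in range(kw):
                    # One MAC: multiply an input value by a weight, add to the sum.
                    acc += image[r + i][c + j] * kernel[i][j]
                    macs += 1
            out[r][c] = acc
    return out, macs


# Illustrative 4x4 input and 2x2 kernel (made-up values).
image = [
    [1, 2, 3, 0],
    [4, 5, 6, 1],
    [7, 8, 9, 2],
    [0, 1, 2, 3],
]
kernel = [
    [1, 0],
    [0, -1],
]
feature_map, mac_count = conv2d_valid(image, kernel)
print(feature_map)  # 3x3 output
print(mac_count)    # 9 outputs x 4 MACs each = 36
```

Even this tiny example needs 36 MACs, and the count grows with image size, kernel size, and channel count. That growth is why hardware built around large MAC arrays suits these workloads.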

That efficiency is what makes NPUs so important in battery-powered devices. Instead of sending data to cloud servers for every AI task, a device can process many tasks locally, improving speed, privacy, and responsiveness.

In practical terms, an NPU may power features like face unlock, live captions, voice assistants, camera scene detection, background blur, and real-time image enhancement, all running directly on your device.

NPU Meaning and Definition (Simple Explanation)

NPU stands for neural processing unit. By definition, it is a dedicated AI accelerator designed to run neural network inference efficiently, especially in devices where power, heat, and latency matter.

The short answer to "what is an NPU?" is this: an NPU helps your device run AI features with lower battery use, and often lower latency, than relying only on a CPU or GPU for the same inference tasks.

NPU vs GPU: What Is the Difference?

Both NPUs and GPUs can run AI workloads, but they are designed for different priorities. A GPU is a powerful parallel processor that can handle graphics, scientific computing, and AI tasks at scale. An NPU is a more specialized AI accelerator focused on efficient inference in devices with strict power and thermal limits.

NPU vs GPU at a Glance

- NPU: Best for on-device AI inference, low power consumption, and real-time AI features in mobile and edge devices.

- GPU: Best for high-throughput parallel computing, graphics rendering, and many large-scale AI training workloads.

- CPU: Best for general-purpose tasks, system control, and mixed workloads, but usually less efficient for AI-heavy operations.

Why the Difference Matters

If a task must run all day on a phone, smartwatch, smart camera, or laptop without draining the battery, an NPU is often the better fit. If the goal is training large models or handling very large compute workloads in workstations and data centers, GPUs remain essential.

Many modern systems use a hybrid approach. They rely on GPUs for heavy development and training workflows, while NPUs handle fast local inference on user devices. This combination improves performance while keeping power usage practical.
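The rules of thumb in this section can be sketched as a toy routing function. Everything here is a hypothetical illustration: the `Workload` fields and the `pick_processor` heuristic are assumptions that paraphrase the comparison above. Real schedulers and runtimes weigh many more factors, such as operator support, memory, and current load.

```python
from dataclasses import dataclass


@dataclass
class Workload:
    """Hypothetical description of a task, for illustration only."""
    name: str
    is_ai: bool
    is_training: bool = False
    power_constrained: bool = False


def pick_processor(w: Workload) -> str:
    """Toy heuristic mirroring the CPU / GPU / NPU guidance above."""
    if not w.is_ai:
        return "CPU"   # general-purpose tasks and system control
    if w.is_training:
        return "GPU"   # high-throughput training workloads
    if w.power_constrained:
        return "NPU"   # efficient inference on battery-powered devices
    return "GPU"       # inference where throughput matters more than power


tasks = [
    Workload("file sync", is_ai=False),
    Workload("model training", is_ai=True, is_training=True),
    Workload("face unlock", is_ai=True, power_constrained=True),
]
for t in tasks:
    print(f"{t.name}: {pick_processor(t)}")
```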

Why NPUs Matter for On-Device AI

The biggest reason NPUs matter is that AI is moving closer to the user. Instead of sending every request to the cloud, devices can now process many AI tasks locally. This improves:

- Speed: Lower latency because data does not need to travel to remote servers.

- Privacy: Sensitive data such as voice, images, or biometric information can stay on the device.

- Battery life: NPUs are designed to run inference at lower power than a CPU or GPU doing the same work, so AI features drain less battery.

- Offline reliability: Some AI features can continue working without an internet connection.

This is one reason NPUs are becoming a core selling point in AI laptops, smartphones, and next-generation edge devices. They support a better user experience, not just bigger benchmark numbers.
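The speed benefit listed above comes down to simple latency arithmetic, sketched here in Python. All numbers are made-up placeholders chosen for illustration, not measurements, and the function names are assumptions. The point is that a cloud request pays for the network round trip even when the server itself is fast.

```python
def cloud_latency_ms(network_rtt_ms, server_inference_ms, queueing_ms=0.0):
    """Total time for a request that leaves the device and comes back."""
    return network_rtt_ms + server_inference_ms + queueing_ms


def fits_frame_budget(latency_ms, fps):
    """True if one inference finishes within a single frame at the given rate."""
    return latency_ms <= 1000.0 / fps


# Hypothetical figures: a fast server behind an 80 ms round trip,
# versus a slower but local on-device inference.
cloud = cloud_latency_ms(network_rtt_ms=80.0, server_inference_ms=5.0, queueing_ms=10.0)
local = 30.0  # on-device inference time in ms (assumed)

print(f"cloud: {cloud:.0f} ms, fits 30 fps frame: {fits_frame_budget(cloud, 30)}")
print(f"local: {local:.0f} ms, fits 30 fps frame: {fits_frame_budget(local, 30)}")
```

Under these assumed numbers, the local path fits inside a 30 fps frame budget (about 33 ms) while the cloud path does not, even though the server's own inference time is shorter.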

Real-World Applications of NPU Processors

Smartphones and Tablets

NPUs in phones and tablets help run camera AI, face unlock, voice features, live translation, and image enhancement directly on the device. This reduces lag and improves privacy by limiting cloud processing for everyday tasks.

AI PCs and Laptops

Newer laptops increasingly include NPUs to accelerate on-device AI features such as background blur, eye contact correction, voice cleanup, transcription, and local assistant tasks. In mobile computing, NPU efficiency is especially important because thermal and battery limits are strict.

Smart Home and IoT Devices

Smart cameras, doorbells, and speakers use NPUs to detect objects, recognize events, and filter false alerts with lower latency. Local AI processing helps these devices respond faster and reduces constant data transfers.

Vehicles, Drones, and Industrial Edge Systems

In autonomous vehicles, drones, and industrial settings, AI decisions often need to happen in milliseconds. NPUs support low-latency inference for tasks such as object detection, anomaly monitoring, route adjustment, and predictive maintenance in edge environments.

NPU Performance: What Actually Matters

When comparing NPUs, marketing numbers alone can be misleading. Metrics like TOPS (trillions of operations per second) are useful, but they do not tell the full story. Real performance depends on model type, memory bandwidth, software optimization, precision format, and thermal limits.
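"Precision format" is worth a concrete look, because TOPS figures are often quoted for low-precision integer math. The sketch below shows one common scheme, symmetric per-tensor int8 quantization, in plain Python. The function names and weight values are illustrative assumptions, and real toolchains use more elaborate schemes (per-channel scales, zero points, calibration).

```python
def quantize_int8(values):
    """Symmetric per-tensor quantization of floats to the range [-127, 127]."""
    max_abs = max(abs(v) for v in values)
    scale = max_abs / 127 if max_abs else 1.0
    q = [max(-127, min(127, round(v / scale))) for v in values]
    return q, scale


def dequantize(q, scale):
    """Map int8 codes back to approximate float values."""
    return [x * scale for x in q]


# Made-up weights for illustration.
weights = [0.5, -1.27, 0.003, 1.0]
q, scale = quantize_int8(weights)
restored = dequantize(q, scale)
max_error = max(abs(a - b) for a, b in zip(weights, restored))

print(q)          # integer codes that the hardware would operate on
print(max_error)  # rounding error, bounded by about scale / 2
```

The small weight `0.003` rounds to zero here, which shows the trade-off: lower precision allows cheaper, faster arithmetic but introduces rounding error that a given model may or may not tolerate.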

For everyday users, the most important NPU performance indicators are often practical outcomes: faster AI features, lower battery drain, smoother camera processing, better voice responsiveness, and reliable on-device AI behavior over time.

In other words, a higher TOPS number does not automatically mean a better experience. Efficiency, software support, and real-world optimization matter just as much as raw throughput.
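A short calculation shows why a higher TOPS figure can lose in practice. Under a common convention, peak TOPS is the number of MAC units times two operations per MAC times the clock rate. A roofline-style estimate then caps real throughput at whatever memory bandwidth can feed. The two chips and the workload below are hypothetical, and the functions are a simplified sketch rather than a vendor's method.

```python
def peak_tops(mac_units, clock_hz, ops_per_mac=2):
    """Theoretical peak in TOPS, counting each MAC as ops_per_mac operations."""
    return mac_units * ops_per_mac * clock_hz / 1e12


def attainable_tops(peak, bandwidth_gb_s, ops_per_byte):
    """Roofline-style bound: compute peak or memory-fed rate, whichever is lower."""
    memory_bound = bandwidth_gb_s * 1e9 * ops_per_byte / 1e12
    return min(peak, memory_bound)


# Hypothetical chips; none of these figures describe a real product.
chip_a_peak = peak_tops(mac_units=16384, clock_hz=1.25e9)  # 40.96 TOPS
chip_b_peak = peak_tops(mac_units=8192, clock_hz=1.25e9)   # 20.48 TOPS

# Hypothetical model that performs 100 operations per byte moved from memory.
chip_a_real = attainable_tops(chip_a_peak, bandwidth_gb_s=50, ops_per_byte=100)
chip_b_real = attainable_tops(chip_b_peak, bandwidth_gb_s=100, ops_per_byte=100)

print(f"A: peak {chip_a_peak:.2f} TOPS, attainable {chip_a_real:.2f} TOPS")
print(f"B: peak {chip_b_peak:.2f} TOPS, attainable {chip_b_real:.2f} TOPS")
```

With these assumed numbers, chip A advertises twice the peak TOPS of chip B, yet chip B sustains twice the throughput on this workload because its memory bandwidth is higher.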

Limitations of NPUs

NPUs are powerful, but they are not universal replacements for CPUs or GPUs. They are optimized for specific AI inference tasks, which means they may not be suitable for every workload. Large model training, general computing, and graphics rendering still rely heavily on GPUs and CPUs.

Another limitation is software ecosystem support. The usefulness of an NPU depends on whether developers and operating systems can target it effectively. Strong hardware with weak software integration often leads to underused AI features.

The Future of NPUs and AI Hardware

NPUs will likely become more common across phones, laptops, wearables, and industrial devices as on-device AI expands. The most important trend is not whether NPUs replace GPUs, but how AI workloads are split across CPUs, GPUs, and NPUs for the best mix of performance, latency, and power efficiency.

As AI hardware evolves, devices will continue to rely on specialized processors for different jobs. That makes the NPU vs GPU discussion less about winners and losers, and more about choosing the right hardware for the right AI task.

Conclusion

A neural processing unit is a specialized AI accelerator that helps devices run machine learning tasks faster, more privately, and with better power efficiency. While GPUs remain critical for many large-scale and high-performance workloads, NPUs are becoming essential for the on-device AI experiences people use every day.

If you are comparing modern phones, laptops, or edge devices, understanding the role of the NPU gives you a clearer picture of real AI performance, not just marketing labels.