Quantum computing’s future is exciting because it could change how we solve certain hard problems in science, security, optimization, and materials. It is also easy to misunderstand because quantum progress is often described with more drama than detail.

The most realistic future is not a sudden world where quantum computers replace every machine. It is a gradual future where quantum systems become useful for specific workloads, often working alongside classical supercomputers, AI accelerators, and cloud platforms.

This guide explains what to expect from quantum computing, where it may matter most, and what still has to be solved before it becomes a practical everyday technology.

Why Quantum Computing Is Different

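Quantum computers use qubits instead of ordinary bits. A qubit can hold a superposition of 0 and 1, and several qubits can be entangled so that their states are correlated. Certain algorithms use interference between these states to reach answers in fewer steps than the best known classical methods, though only for particular kinds of problems. The power comes from quantum behavior, but the weakness also comes from quantum behavior: qubits are fragile and easily disturbed.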
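To make the qubit idea concrete, here is a minimal sketch that simulates one qubit on an ordinary computer. It assumes the standard textbook picture of a qubit as two complex amplitudes, and the function names `hadamard` and `probabilities` are illustrative rather than taken from any particular library.

```python
import math

def hadamard(state):
    """Apply a Hadamard gate to a single-qubit state (a0, a1)."""
    a0, a1 = state
    s = 1 / math.sqrt(2)
    return (s * (a0 + a1), s * (a0 - a1))

def probabilities(state):
    """Chance of measuring 0 or 1: the squared magnitude of each amplitude."""
    return tuple(abs(a) ** 2 for a in state)

zero = (1 + 0j, 0 + 0j)      # the qubit starts as a definite 0
plus = hadamard(zero)        # equal superposition of 0 and 1
back = hadamard(plus)        # interference brings it back to 0

print(probabilities(zero))
print(probabilities(plus))   # roughly one half each
print(probabilities(back))   # roughly all weight on 0 again
```

The second Hadamard does not scramble the state further. The amplitudes for 1 cancel, which is a small example of the interference that quantum algorithms rely on.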

That fragility is why error correction is such a big topic. A useful quantum computer needs not only many qubits, but high-quality qubits, low error rates, reliable control, and enough correction to keep calculations meaningful.

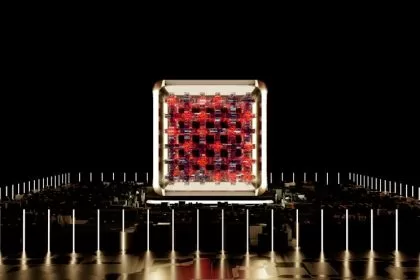

Near-Term Quantum Computers

Near-term quantum machines are often called noisy intermediate-scale quantum devices. They can run experiments and help researchers learn, but they are limited by noise and errors. Some may show advantage on narrow tasks, but broad practical value is still developing.

This stage still matters. Early machines help scientists test hardware, improve control systems, explore algorithms, and train a workforce. Many technologies look limited before the supporting ecosystem matures.

Useful Quantum Computing May Be Hybrid

The practical future may be hybrid: classical computers handle most work, while quantum processors handle selected subproblems. A cloud platform might send a chemistry, optimization, or sampling task to a quantum backend, then return results to a classical workflow.

This is similar to how GPUs support AI today. A laptop does not become a GPU, but it can use GPU acceleration for the right work. Quantum processors may become another specialized accelerator for problems that fit.
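The hybrid pattern described above can be sketched as a classical loop that repeatedly asks a quantum processor for one number. This is only an illustration under stated assumptions: `quantum_expectation` is a hypothetical stand-in for a backend call, and it is computed with ordinary math here because the single-qubit case has a known closed form.

```python
import math

def quantum_expectation(theta):
    """Hypothetical stand-in for a quantum backend call.

    For one qubit rotated by RY(theta) from the 0 state, the expectation
    value of a Z measurement is cos(theta), so it is computed directly.
    """
    return math.cos(theta)

def parameter_shift_gradient(f, theta):
    # Parameter-shift rule for a single rotation angle: the gradient comes
    # from two extra evaluations of the same circuit at shifted angles.
    return (f(theta + math.pi / 2) - f(theta - math.pi / 2)) / 2

def minimize(f, theta=0.4, learning_rate=0.4, steps=100):
    # Classical outer loop: plain gradient descent on the angle.
    for _ in range(steps):
        theta -= learning_rate * parameter_shift_gradient(f, theta)
    return theta, f(theta)

best_theta, best_value = minimize(quantum_expectation)
print(best_theta, best_value)  # the angle approaches pi, the value approaches -1
```

In a real workflow the classical side would also handle queuing, repeated measurements, and noise in the returned values. The point of the sketch is the division of labor, not the optimizer.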

Where Quantum Could Matter Most

| Area | Potential value | Reality check |
|---|---|---|
| Cryptography | Forces migration to post-quantum security | Preparation is needed before large attacks exist |
| Chemistry | Models molecules and reactions more naturally | Hardware must become more stable |
| Materials | Could support batteries, catalysts, and superconductors | Lab discovery still needs manufacturing proof |
| Optimization | May help complex scheduling and search problems | Must beat strong classical methods |
| AI research | Sampling and quantum machine learning experiments | Still early for mainstream AI use |

Security Will Move First

Even before quantum computers become widely useful, security planning is already moving. Organizations with long-lived sensitive data need to prepare for post-quantum cryptography. That means finding where vulnerable encryption is used, updating systems, and planning migrations carefully.

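This work is driven by quantum computing even though it happens entirely on classical systems. The security impact is not just about future machines. Encrypted data captured now could be stored and decrypted later, and today’s systems need a long runway to adapt.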
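The first step mentioned above, finding where vulnerable encryption is used, can be pictured as a simple inventory filter. The sketch below is an illustration only: the system names and data lifetimes are made up, and the algorithm list covers just the common public-key families that Shor's algorithm is known to threaten.

```python
# Public-key families that a large quantum computer running Shor's
# algorithm could break. This is not an exhaustive list.
QUANTUM_VULNERABLE = {"RSA", "DSA", "DH", "ECDH", "ECDSA"}

def needs_migration(inventory):
    """Return systems using a vulnerable family, longest-lived data first."""
    flagged = [s for s in inventory if s["algorithm"].upper() in QUANTUM_VULNERABLE]
    return sorted(flagged, key=lambda s: s["data_lifetime_years"], reverse=True)

# Hypothetical inventory entries, invented for this example.
inventory = [
    {"system": "vpn-gateway", "algorithm": "ECDH", "data_lifetime_years": 2},
    {"system": "records-archive", "algorithm": "RSA", "data_lifetime_years": 25},
    {"system": "disk-encryption", "algorithm": "AES", "data_lifetime_years": 10},
]

for entry in needs_migration(inventory):
    print(entry["system"], entry["algorithm"], entry["data_lifetime_years"])
```

Sorting by data lifetime reflects the point about long-lived sensitive data: the longer information must stay secret, the earlier its protection needs attention.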

Why Error Correction Matters

Quantum errors can come from heat, vibration, electromagnetic interference, imperfect gates, measurement problems, and interactions with the environment. Error correction uses many physical qubits to create more reliable logical qubits.

This is a major reason timelines are uncertain. Counting physical qubits is not enough. The more important question is whether the system can run long, accurate computations with corrected errors.
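The idea of spending many physical qubits on one logical qubit can be illustrated with a classical toy model: store the same bit several times and take a majority vote. This is an assumption-heavy simplification, since real quantum codes must also handle phase errors and cannot simply copy a state, but the arithmetic shows why redundancy only helps when the physical error rate is low enough.

```python
from math import comb

def logical_error_rate(p, n):
    """Chance that a majority vote over n copies is wrong.

    Each copy flips independently with probability p. The vote fails
    when more than half of the copies flip. Use an odd n to avoid ties.
    """
    return sum(
        comb(n, k) * p**k * (1 - p) ** (n - k)
        for k in range(n // 2 + 1, n + 1)
    )

for p in (0.01, 0.1, 0.6):
    rates = [logical_error_rate(p, n) for n in (1, 3, 5, 7)]
    print(p, rates)
```

With a 1% physical error rate, three copies already push the logical error rate far below 1%, and more copies help further. With a 60% error rate, adding copies makes the result worse, which mirrors why qubit quality matters as much as qubit count.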

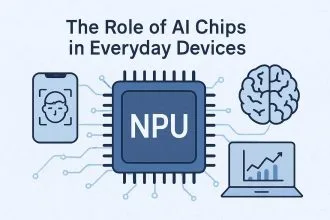

Quantum Computing and AI

Quantum computing may support AI in narrow ways, but it is unlikely to replace GPUs for mainstream AI soon. Today’s AI systems need huge memory bandwidth, mature software, and massive parallel computing. Quantum systems are not built for that general job yet.

The interesting future is more specialized: quantum methods for sampling, optimization, or simulating systems that are hard for classical computers. AI and quantum computing may also help each other, with AI improving quantum control and quantum research opening new data or material possibilities.

What Could Slow the Future Down

- Qubit quality and error rates.

- Cooling and control system complexity.

- Cost of building and operating machines.

- Shortage of practical quantum algorithms.

- Difficulty proving advantage over classical computing.

- Security migration complexity for old systems.

How to Read Quantum Claims

Ask whether a claim is about science, engineering, or business value. A scientific milestone may be important even if it is not commercially useful yet. A business claim should show a problem, a performance comparison, a cost argument, and a path to repeatability.

Also ask whether the result includes error correction, how many logical qubits were used, and whether a strong classical baseline was tested. These questions keep the excitement connected to evidence.

What a Realistic Timeline Looks Like

Quantum computing will likely mature in layers. The first layer is research value: better experiments, better control, and better understanding of algorithms. The second layer is specialized advantage for narrow problems. The third layer is broader commercial workflows where quantum processors become part of cloud and enterprise systems.

Those layers may overlap, but they should not be confused. A research milestone can be valuable even if it does not change everyday software. A commercial pilot can teach useful lessons even if it does not beat every classical method. A broad platform shift requires reliability, tooling, cost control, and enough trained people to use it.

Software and Talent Matter Too

Quantum hardware gets most of the attention, but software will decide much of the practical value. Developers need languages, compilers, error-mitigation tools, simulators, workflow integrations, and ways to decide whether a problem fits quantum computing at all.

Talent is another constraint. Quantum computing sits between physics, computer science, mathematics, and engineering. Companies that want to explore it need people who can translate real problems into forms that quantum algorithms might help with.

How Ordinary Users May Notice It

Most people will not own a quantum computer. They may notice quantum computing indirectly through better materials, stronger security standards, improved drugs, better batteries, or cloud services that use quantum acceleration behind the scenes.

That is normal. Most people do not own data center GPUs either, but those chips still shape the tools they use. Quantum computing may follow a similar path if the technology matures.

What Should Not Be Overhyped

Quantum computing should not be presented as a shortcut around every hard problem. It will not automatically make weak AI systems intelligent, poor security practices safe, or bad business models valuable. A useful quantum application still needs a problem that fits, a reliable machine, good software, and a result that beats classical alternatives.

This is why careful language matters. “Potential” is not the same as “ready.” “Promising” is not the same as “commercially proven.” A balanced view leaves room for both excitement and patience.

A good rule is to ask what changes for the user. If the answer is only “the technology is advanced,” the story is incomplete. Useful quantum computing should eventually improve a specific process, reduce a specific cost, or solve a specific problem.

Separate Quantum Potential From Practical Readiness

Quantum computing should be read in layers: research progress, narrow advantage, security migration, cloud access, and practical workflows. A milestone can be scientifically important without being ready for everyday software or business use.

For a simpler foundation, read the quantum computing guide. For hardware limits and future materials, compare it with the guide on advanced chip technology. For AI hardware, see the guide on future AI processors.

Security note: this is general technology education, not a migration plan for cryptography, enterprise security, or regulated systems.

Use a Quantum Readiness Ladder

The future of quantum computing is easier to judge in stages. A lab demonstration, a useful noisy device, an error-corrected machine, and a business-changing system are not the same thing.

- Stage 1: research devices prove physics and improve control.

- Stage 2: hybrid workflows test whether quantum adds value beside classical computing.

- Stage 3: error correction makes longer, more reliable calculations possible.

- Stage 4: specific industries get repeatable advantage in chemistry, materials, optimization, or security planning.

This is educational technology context, not cybersecurity, investment, procurement, or professional IT advice.

- If your concern is online protection, connect it with future digital security.

Source note: for a plain technical baseline on quantum computing concepts, IBM’s quantum computing explainer is a useful reference.

Bottom Line

Quantum computing’s future is real but specialized. It may transform security planning, chemistry, materials, optimization, and parts of AI research, but it will likely work alongside classical systems rather than replacing them.

The next major breakthroughs will depend on better qubits, lower errors, practical error correction, useful algorithms, and honest comparisons with classical computing. The future is promising because the science is different, not because every claim is ready today.

Materials Limits Shape Quantum Computing Too

Quantum computing is often described through algorithms and qubits, but materials are part of the bottleneck. Stability, noise, fabrication quality, cooling, and device architecture affect whether a system can scale beyond demonstrations. That connects quantum progress with the broader story of element engineering and advanced materials.

Materials such as graphene can appear in future-computing discussions, but they should not be treated as a guaranteed shortcut. The useful question is what role a material plays in a specific design: sensing, interconnects, thermal behavior, substrates, or device stability.

If you want the current-state version before looking further ahead, start with the quantum computing in 2025 guide. It separates near-term reality from longer-term promises around AI, security, cloud access, and error correction.