Remember when AI felt like something out of a sci-fi movie, happening in giant server rooms or ‘the cloud’? Well, that’s rapidly changing. AI is no longer just living far away; it’s moving directly into the gadgets we use every single day. From your smartphone to your smart speaker, and even your car, devices are getting serious upgrades that let them handle complex AI tasks right then and there. This isn’t just a technical tweak; it’s a fundamental shift in how technology serves us, making our devices smarter, faster, and more private. We’re witnessing a quiet but powerful revolution where on-device AI acceleration is redefining what’s possible.

TL;DR

- AI is shifting from remote servers to local devices.

- Specialized chips called NPUs power this local AI.

- Benefits include speed, privacy, and offline functionality.

- Your smartphones, laptops, and cars are already using it.

- This makes daily tech smarter and more responsive.

- It’s a big step towards truly intelligent device performance.

The Shift to On-Device AI

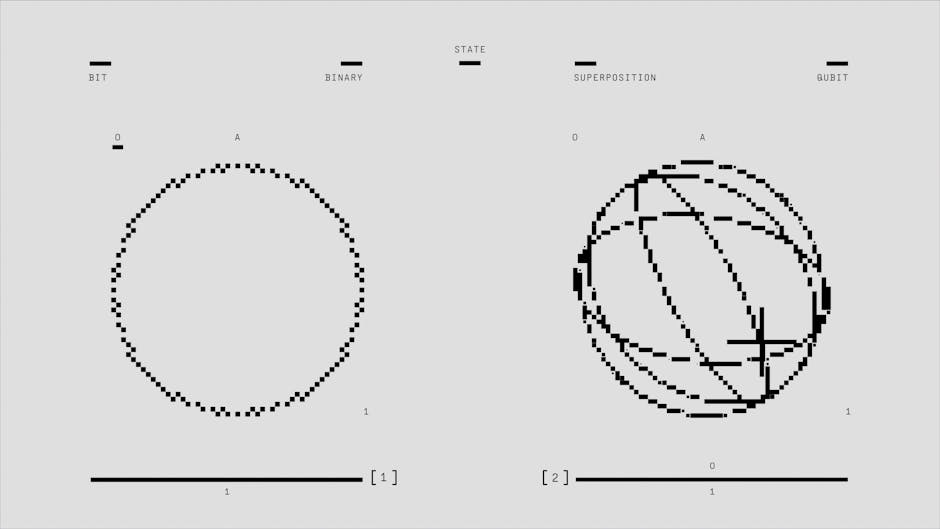

For years, when your device needed to do something smart – like recognizing faces in photos or giving you personalized recommendations – it would send that data off to a huge data center somewhere. This is what we call ‘cloud AI.’ It works, but it has drawbacks: it needs an internet connection, can be slow due to data travel, and raises privacy concerns because your information leaves your device.

Now, thanks to significant advancements in embedded AI chips, more and more of that heavy lifting is happening directly on the device itself. Think of it like a personal chef for your data, right in your kitchen, rather than sending ingredients to a central restaurant. This ‘Device AI Processing’ means faster responses, greater reliability, and enhanced privacy, as your data often doesn’t need to leave your gadget to be analyzed.

- Pro-Tip: Check your device’s specs for mentions of ‘NPU’ or ‘AI engine’ to see if it has dedicated hardware for on-device AI.

- Common Pitfall: Assuming all ‘smart’ features require an internet connection; many are now processed locally.

What Makes Devices Smarter?

The real muscle behind this shift comes from specialized hardware. We’re talking about things like Neural Processing Units (NPUs) and other dedicated AI accelerators. These aren’t your traditional computer processors (CPUs) or even graphics processors (GPUs), which have historically handled some AI tasks. NPUs are designed from the ground up to excel at the specific mathematical operations that AI, particularly machine learning, relies on: mostly huge numbers of simple multiply-and-add steps. They can perform these tasks much more efficiently, using less power and generating less heat, which is crucial for battery-powered devices.
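To make that "specialized math" a little more concrete, here is a minimal Python sketch of a multiply-and-add loop done with small 8-bit integers instead of full-precision decimals. It assumes the common case where an accelerator works on low-precision numbers; the function names `quantize` and `int8_dot` and the sample values are made up for illustration and don't come from any particular chip or library.

```python
def quantize(values, scale):
    """Map floats to integers in the int8-style range [-127, 127]."""
    return [max(-127, min(127, round(v / scale))) for v in values]


def int8_dot(a_q, b_q, a_scale, b_scale):
    """Integer multiply-accumulate, rescaled to a float once at the end."""
    acc = sum(x * y for x, y in zip(a_q, b_q))  # integer-only inner loop
    return acc * a_scale * b_scale


# Hypothetical weights and inputs for one tiny "neuron".
weights = [0.5, -0.25, 0.75]
inputs = [1.0, 2.0, -1.0]

# One scale per list, chosen so the largest value maps to 127.
w_scale = max(abs(w) for w in weights) / 127
i_scale = max(abs(i) for i in inputs) / 127

w_q = quantize(weights, w_scale)
i_q = quantize(inputs, i_scale)

exact = sum(w * i for w, i in zip(weights, inputs))
approx = int8_dot(w_q, i_q, w_scale, i_scale)

print("full precision:", exact)
print("8-bit approximation:", approx)
```

The two results land close together, which is the point: the integer version gives up a little precision in exchange for much simpler arithmetic, and that kind of arithmetic is what dedicated AI hardware is built to do in bulk.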

This next-gen NPU technology allows devices to run complex AI models quickly enough for real-time use. Imagine real-time language translation, advanced camera features that adjust settings instantly, or voice assistants that respond without a noticeable delay, often without sending your data to a remote server at all. This dedicated hardware is driving the wave of intelligent device performance we’re seeing today. Understanding NPUs can help you appreciate the tech behind your smart gadgets.

- Pro-Tip: Devices with a powerful NPU can often process voice commands or image recognition faster and more securely.

- Common Pitfall: Thinking an NPU is just another fancy CPU; it’s specialized for a very different type of computational task.

Real-World Impact: AI in Your Pocket and Home

So, what does this actually mean for you, the everyday user? A lot, as it turns out.

- Smarter Smartphones: Your phone’s camera uses AI to improve photos before you even press the shutter, recognizing scenes and faces and adjusting lighting. Facial recognition for unlocking? That’s on-device AI. Predictive text and intelligent suggestions for apps? Increasingly handled locally too, making your phone feel more intuitive.

- Private Voice Assistants: While some requests still go to the cloud, initial voice command processing – the ‘wake word’ detection and basic command understanding – increasingly happens right on your smart speaker or phone. This means it’s listening smarter, without constantly sending recordings of your daily life over the internet.

- Better Laptops: Many modern laptops now include dedicated embedded AI chips. These improve video conferencing (blurring your background, eye-tracking features), and because they run AI-powered tasks using less power than the main processor would, they can help extend battery life.

- Safer Cars: Advanced driver-assistance systems use on-device AI to interpret sensor data, recognize pedestrians, read road signs, and even predict potential hazards in real time. There’s no time for a round trip to the cloud when safety is on the line.

This push for on-device AI acceleration is making our gadgets more capable, responsive, and trustworthy.
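The voice assistant example above can be sketched in a few lines of Python. This is a simplified illustration, not how any specific assistant works: the wake phrase `"hey device"` and the function `frames_to_upload` are hypothetical, and plain text strings stand in for short audio snippets that a real device would analyze with a small local model.

```python
WAKE_WORD = "hey device"  # hypothetical wake phrase


def frames_to_upload(frames):
    """Return only the frames that follow a locally detected wake word.

    Each frame is a text stand-in for a short audio snippet.
    """
    uploaded = []
    awake = False
    for frame in frames:
        if awake:
            uploaded.append(frame)  # the actual request may go to the cloud
            awake = False           # then return to local-only listening
        elif WAKE_WORD in frame.lower():
            awake = True            # detected on-device; nothing sent yet
    return uploaded


heard = [
    "dinner conversation",
    "Hey device",
    "what's the weather tomorrow",
    "more dinner conversation",
]
print(frames_to_upload(heard))
```

In this sketch, everything said before the wake phrase stays on the device, and only the request that follows it is a candidate for cloud processing.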

Common Misconceptions

- On-device AI replaces the cloud: Not entirely. They often work together, with on-device AI handling immediate tasks and cloud AI managing more massive, complex computations or updates.

- It’s only for high-end devices: While top-tier gadgets lead the way, embedded AI chips are becoming standard in mid-range devices too, making advanced AI features more accessible.

- On-device AI is always faster: Not always. Very large models can still run faster on powerful cloud servers, but on-device AI is more consistent and often faster for specific, repeated tasks, thanks to specialized hardware and the lack of internet latency.

- It makes devices too expensive: The cost of these specialized chips is coming down, and the benefits in user experience often outweigh the marginal price increase.
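The first and third points above, that device and cloud work together and that local isn't automatically faster, can be illustrated with a small decision sketch. Everything here is an assumption for illustration: the `Task` fields, the `choose_backend` function, and all the millisecond figures are invented, and real devices use far more involved logic.

```python
from dataclasses import dataclass


@dataclass
class Task:
    name: str
    local_ms: float       # estimated on-device compute time
    cloud_ms: float       # estimated server compute time
    fits_on_device: bool  # whether the model is small enough to run locally


def choose_backend(task, network_rtt_ms, online=True):
    """Pick where to run a task based on availability and estimated delay."""
    if not online:
        return "device" if task.fits_on_device else "unavailable"
    if not task.fits_on_device:
        return "cloud"
    # The cloud pays for the network round trip on top of its compute time.
    cloud_total_ms = network_rtt_ms + task.cloud_ms
    return "device" if task.local_ms <= cloud_total_ms else "cloud"


# Hypothetical tasks with made-up timings.
face_unlock = Task("face unlock", local_ms=30, cloud_ms=5, fits_on_device=True)
long_summary = Task("long summary", local_ms=4000, cloud_ms=300, fits_on_device=True)

print(choose_backend(face_unlock, network_rtt_ms=80))
print(choose_backend(long_summary, network_rtt_ms=80))
print(choose_backend(long_summary, network_rtt_ms=80, online=False))
```

With these invented numbers, the quick task stays on the device because the network round trip alone costs more than running it locally, while the heavy task goes to the cloud when a connection is available and falls back to the device when it isn't.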

Next Steps

- Check Your Specs: When buying a new device, look for mentions of AI accelerators, NPUs, or dedicated AI engines in the product description.

- Explore Settings: Look at your current smartphone or laptop settings; you might find AI-powered features you didn’t even know you had, especially in camera or privacy sections.

- Stay Informed: Keep an eye on tech news to see how new generations of AI chips are pushing the boundaries of what our personal devices can do.
